Live streaming is an exciting way to engage audiences all over the world. In fact, the medium’s popularity is growing at an exponential rate. But if you’re new to the world of real-time streaming, getting used to the lingo can be an adjustment. While some words may sound familiar to filmmaking veterans, they take on a new meaning in the context of going live.

Today, we’re sharing a list of key terms you’ll need to know to get your event up and running. Ready to get started?

1. Audio visual sync

Audio visual sync refers to the relationship between the audio and video tracks of a live broadcast. If the sync is off, the video will be ahead of the audio, or vice versa. Checking sync is important before going live, because it’s one of the most frustrating technical problems for viewers during a live event.

2. Backhaul

Backhaul is content that’s recorded live but isn’t broadcast. For example, if a basketball game goes to a commercial break, the cameras will continue rolling even though the viewers are watching commercials (or a slate). Backhaul content is visible in the producer’s software, but it typically isn’t shown to viewers. If backhaul content is appearing to your viewers, it could indicate a technical problem in the broadcast software.

3. Bitrate

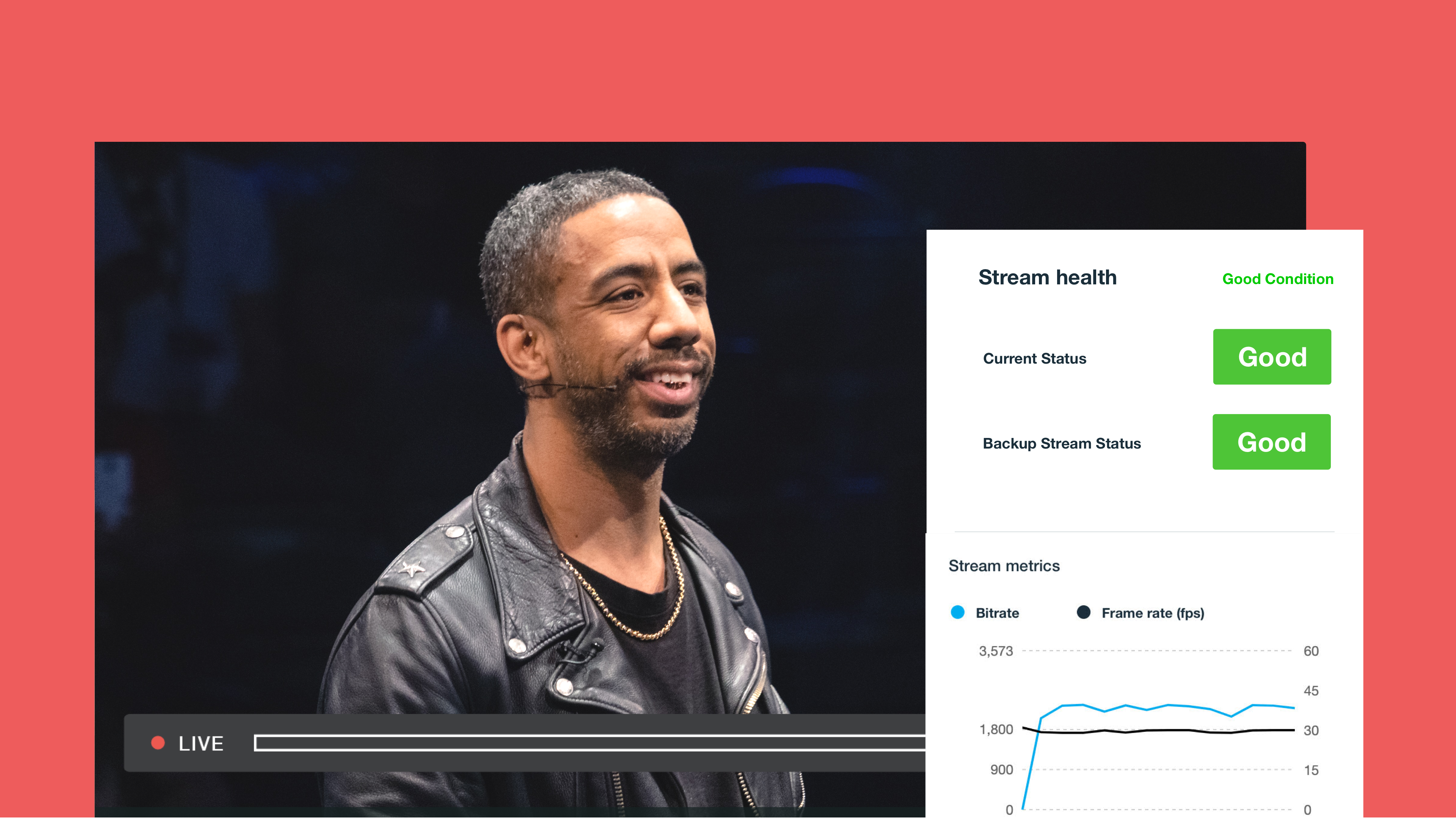

Bitrate is the rate at which data is transported from point A to point B. It’s typically measured in kilobits per second (kbps) or megabits per second (Mbps), and it varies with several factors, including the source and receiver network connections, video compression, and resolution.
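
To make the idea concrete, here is a minimal sketch of how a bitrate translates into total data sent. It assumes a constant bitrate quoted in kilobits per second (kbps); the function name and the 4,500 kbps figure are illustrative choices, not recommended settings.

```python
def stream_size_megabytes(bitrate_kbps: float, duration_seconds: float) -> float:
    """Approximate data sent by a stream running at a constant bitrate."""
    total_kilobits = bitrate_kbps * duration_seconds
    total_kilobytes = total_kilobits / 8  # 8 bits per byte
    return total_kilobytes / 1000  # decimal megabytes


# Hypothetical example: one hour of streaming at 4,500 kbps.
one_hour_mb = stream_size_megabytes(bitrate_kbps=4500, duration_seconds=3600)
print(f"{one_hour_mb:.0f} MB")  # prints "2025 MB"
```

Real streams rarely hold a perfectly constant bitrate, so treat the result as a rough estimate.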

4. Broadcaster

A broadcaster is what you’d call the client(s) who are delivering media to the live backend.

5. Bugs

Bugs are a media term for graphical elements overlaid on top of a stream with a switcher. One example would be the logo of a brand.

6. Chat

Chat features allow live stream viewers to post comments that run in real time alongside the video player. This is an excellent way for viewers to communicate with the on-camera personnel, and with each other. Live chat is one of the defining elements of live streaming as a medium. And it’s included in Vimeo Live.

7. Chopping

Chopping is when you clip recorded live media. It’s typically used to cut the test period from the beginning of the live stream, so viewers who rewind in a DVR scenario don’t see it.

8. Cloud transcoding

Cloud transcoding means utilizing a network to provision encoding machines the moment they are requested, as opposed to having dedicated machines waiting unused.

9. Compression

In the context of live streaming, compression increases processing efficiency by decreasing the overall size of the streaming video. The industry standard is H.264 (also known as AVC, or MPEG-4 Part 10). Vimeo uses this compression for both our uploaded and live videos.

10. Content delivery network (CDN)

A content delivery network consists of global servers that allow users to access websites from machines that are geographically local. For example, if a user in Hong Kong wants to check your England-based site, they’ll automatically connect to the CDN machine in Hong Kong, which has a copy of all information accessible on the server in England. CDNs greatly improve network connection and user experience by shortening the cable run.

11. Delay

Depending on the type of live stream you’re running, you may choose to include an intentional delay on the production side. Delays give you the ability to cut out before something sensitive hits end viewers. It also lets you insert lower thirds throughout the stream, or censor mature language.
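
One way to picture an intentional delay is as a queue that holds frames back before they air. The sketch below is a simplified illustration under that assumption; `DelayBuffer` and its methods are hypothetical names, not part of any broadcast software.

```python
from collections import deque


class DelayBuffer:
    """Holds back a fixed number of frames before releasing them to viewers."""

    def __init__(self, delay_frames: int):
        self.delay_frames = delay_frames
        self._queue = deque()

    def push(self, frame):
        """Add a new frame; return the frame that is now old enough to air, or None."""
        self._queue.append(frame)
        if len(self._queue) > self.delay_frames:
            return self._queue.popleft()
        return None

    def drop_pending(self) -> int:
        """Discard everything that has not aired yet; return how many frames were cut."""
        dropped = len(self._queue)
        self._queue.clear()
        return dropped


# A 5-second delay at 30 FPS holds back 150 frames.
buffer = DelayBuffer(delay_frames=5 * 30)
```

Calling `drop_pending()` is the "cut out" moment: anything captured during the delay window never reaches end viewers.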

12. Delta frames

Delta frames are the frames that follow key frames in the group of pictures sequence (see #20 for more on GOP sequences). These frames are easier for machines to process because they use prediction algorithms to pre-load where objects will be in their next frame, based on information from other frames. This process is known as inter frame prediction (see #25 for more). There are two types of delta frames. Predictive frames (aka P-frames) use only elements from previous frames to predict motion. Bi-directional frames (B-frames), on the other hand, use elements from both previous and upcoming frames. Both types use that information as a processing shortcut. B-frames are more versatile than P-frames, but they take slightly longer to process.

13. Downlink

Downlink is the connection from a satellite back to the specific encoding site (or full production studio).

14. Dropped frames

Dropped frames signify the loss of video frames during the encoding/compression stage. This occurs when more data is transferred than the machine’s CPU can process. The result is a live stream that looks like it’s skipping, with audio that falls further out of sync with each dropped frame. Restarting your machine is usually the way to correct this issue, so the best protocol is to have a backup machine on hand just in case. If restarting your machine doesn’t work, the issue could be incorrect encoder settings.
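
As a rough illustration of why dropped frames matter, the sketch below estimates the drop percentage and how far the audio could drift. It assumes each dropped frame shifts the video by exactly one frame duration, which is a simplification; the function name is hypothetical.

```python
def dropped_frame_stats(frames_captured: int, frames_dropped: int, fps: float):
    """Return (drop_percent, drift_seconds) for a stream.

    Assumes every dropped frame moves video and audio apart by one frame duration.
    """
    if frames_captured <= 0 or fps <= 0:
        raise ValueError("frames_captured and fps must be positive")
    drop_percent = 100 * frames_dropped / frames_captured
    drift_seconds = frames_dropped / fps
    return drop_percent, drift_seconds


# Hypothetical example: 10 minutes at 30 FPS (18,000 frames) with 90 frames dropped.
percent, drift = dropped_frame_stats(18000, 90, 30)
print(percent, drift)  # prints "0.5 3.0"
```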

15. DVR

With a DVR, live content can be played back anywhere within a predetermined timeline.
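
A DVR timeline can be thought of as a sliding window over the most recent chunks of video. The sketch below assumes the stream is stored as fixed-length, sequentially numbered segments; `DvrWindow` is a hypothetical name used only for illustration.

```python
from collections import deque


class DvrWindow:
    """Keeps only the most recent segments so viewers can seek within a fixed window."""

    def __init__(self, window_seconds: int, segment_seconds: int):
        self.segment_seconds = segment_seconds
        max_segments = int(window_seconds // segment_seconds)
        # A deque with maxlen silently discards the oldest segment when full.
        self._segments = deque(maxlen=max_segments)

    def add_segment(self, index: int) -> None:
        """Record that segment number `index` (0-based) is available."""
        self._segments.append(index)

    def seekable_range(self):
        """Return (start, end) in seconds of stream time, or None if nothing is stored."""
        if not self._segments:
            return None
        start = self._segments[0] * self.segment_seconds
        end = (self._segments[-1] + 1) * self.segment_seconds
        return (start, end)


window = DvrWindow(window_seconds=60, segment_seconds=6)
for segment_index in range(15):  # 90 seconds of 6-second segments
    window.add_segment(segment_index)
print(window.seekable_range())  # prints "(30, 90)"
```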

16. Encoding / transcoding

When video is first recorded, it exists in one of a variety of formats depending on your equipment. Encoding — sometimes called transcoding — is the process of converting raw, analog, or broadcast video files to digital video files.

17. Fiber

Fiber is a fast and reliable solution for transmitting a live stream from an event source to an encoder destination. For large-scale events, live stream production crews rent fiber in the ground; in these cases, it’s also often used as a redundant source (see #32) with satellite.

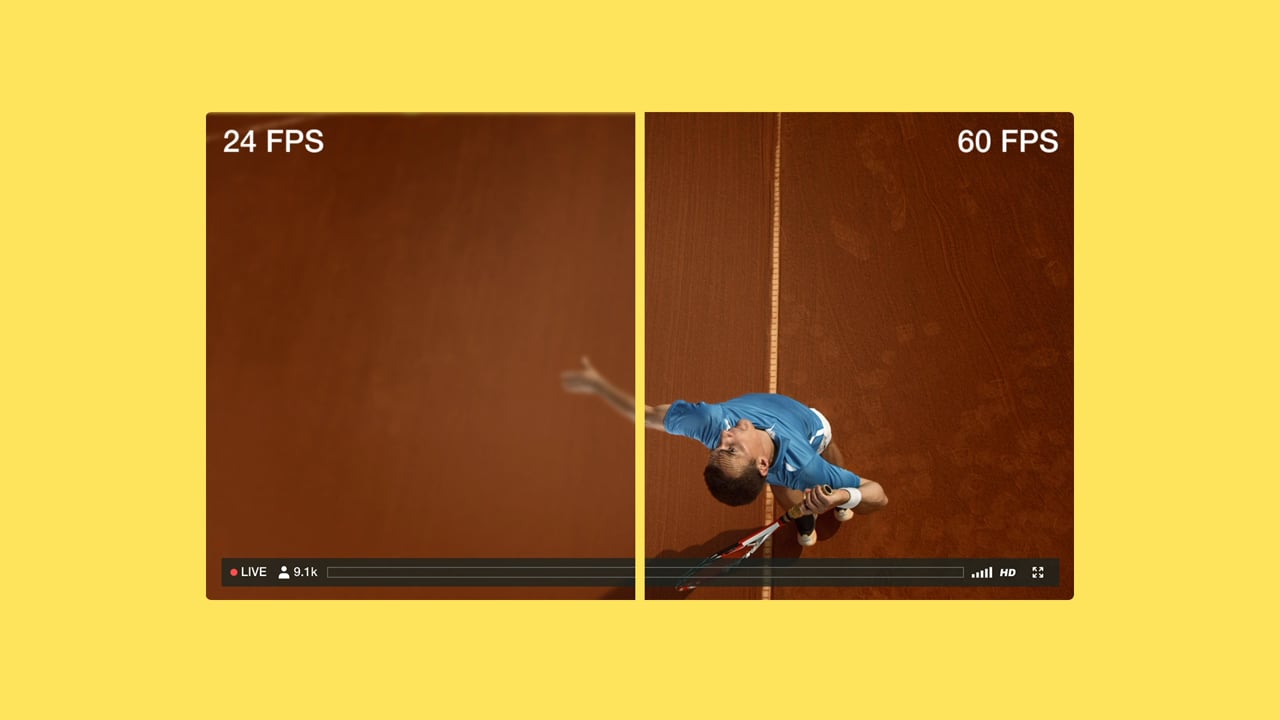

18. Frames

Frames are the series of still images that make up your video. Think of these like the pages of a flip book. The less motion per frame and the more frames per second, the smoother the video. The rate is measured in frames per second (FPS), aka the number of frames displayed per second of video.

19. Frame rate

Frame rate directly affects compression, and is one of the main factors in encoder settings. The two most common frame rates are 30 FPS and 60 FPS.

20. Group of pictures sequence (GOP sequence)

A group of pictures sequence, or GOP sequence, is the typical structure of video frames compressed using inter frame prediction (#25).
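
To show what such a sequence looks like, here is a small sketch that lays out one GOP as a string of frame types: a key frame (I) followed by delta frames (P and B). The layout rule used here (a fixed number of B-frames before each P-frame) is an assumption for illustration; real encoders choose frame types with their own logic.

```python
def gop_pattern(gop_length: int, b_frames: int) -> str:
    """Return the frame types of one GOP in display order, e.g. 'IBBPBBP'."""
    if gop_length < 1 or b_frames < 0:
        raise ValueError("gop_length must be >= 1 and b_frames must be >= 0")
    frames = ["I"]  # every GOP starts with a key frame
    while len(frames) < gop_length:
        # Insert up to `b_frames` B-frames, leaving room for the closing P-frame.
        for _ in range(b_frames):
            if len(frames) < gop_length - 1:
                frames.append("B")
        frames.append("P")
    return "".join(frames)


print(gop_pattern(gop_length=12, b_frames=2))  # prints "IBBPBBPBBPBP"
print(gop_pattern(gop_length=4, b_frames=0))   # prints "IPPP"
```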

21. Hardware encoder

Hardware encoders are computers dedicated to encoding and processing video. They offer optimized performance and come preloaded with broadcast software.

22. Hot mics

Hot mics are microphones that are live and recording while your event is on a break. (Like sporting event commentators talking to each other during a commercial break.) This is something to watch out for, since the speakers assume they’re not live. The best way to troubleshoot this issue is to have your producer deploy a slate over your live stream.

23. Ingest

Ingesting is the act of consuming video from a broadcaster in the live backend.

24. Ingest point

The ingest point is the point at which a streaming server begins receiving a signal from the provisioned broadcast.

25. Inter frame prediction

Inter frame prediction is a method of video compression that utilizes existing frames’ shared elements to predict and pre-load the same elements in future frames. This process greatly increases compression rates and allows us to process more video per second than would otherwise be possible. Think of this as copying and pasting objects from one page to another, instead of drawing them over and over.
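
The copy-and-paste idea can be sketched with plain frame differencing: store only the pixels that changed since the previous frame. This is a deliberately simplified stand-in, since real codecs predict motion on blocks of pixels rather than comparing individual values; the function names are hypothetical.

```python
def encode_delta(previous, current):
    """Return {pixel_index: new_value} for every pixel that changed between frames."""
    return {
        index: new_value
        for index, (old_value, new_value) in enumerate(zip(previous, current))
        if old_value != new_value
    }


def apply_delta(previous, delta):
    """Rebuild the current frame from the previous frame plus the stored changes."""
    frame = list(previous)
    for index, new_value in delta.items():
        frame[index] = new_value
    return frame


# Two tiny six-pixel "frames" where only one pixel changes.
frame_a = [10, 10, 10, 10, 200, 200]
frame_b = [10, 10, 10, 10, 10, 200]
delta = encode_delta(frame_a, frame_b)
print(delta)  # prints "{4: 10}"
```

Here one stored value stands in for a six-pixel frame, which is the kind of saving that lets more video be processed per second.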

26. IP camera

An internet protocol camera, or IP camera, is a type of digital video camera commonly employed for surveillance. Unlike analog closed circuit television (CCTV) cameras, IP cameras can send and receive data via a computer network and the internet.

27. Key frames

Also known as I-frames, key frames are completely processed frames of digital video. They are the largest frames to process, since there’s no prediction used to display them. Key frames are the base from which delta frames predict motion and copy objects. Within encoding settings, the key frame interval is an important one to consider; in general, forcing a key frame every two seconds is optimal for live streaming.
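
Some encoders ask for the key frame setting in frames rather than seconds. Assuming that is the case for yours, the conversion is a single multiplication; the function name below is hypothetical.

```python
def keyframe_interval_frames(fps: float, keyframe_seconds: float = 2.0) -> int:
    """Convert a key frame interval in seconds to a whole number of frames."""
    if fps <= 0 or keyframe_seconds <= 0:
        raise ValueError("fps and keyframe_seconds must be positive")
    return round(fps * keyframe_seconds)


print(keyframe_interval_frames(30))  # prints "60"
print(keyframe_interval_frames(60))  # prints "120"
```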

28. Live support

A feature within the event setup, live support lets viewers chat with a member of the support team to troubleshoot issues regarding the setup process — or during a specific event.

29. Lower thirds

Lower thirds are a media term for graphical elements on the bottom of the video. These are overlaid on top of a stream through a switcher (e.g., the title of the person on camera).

30. Monitoring

Monitoring is when you watch a live stream as if you’re a member of the audience. This is usually done from the production area during streaming to be sure the event isn’t experiencing any technical problems from the viewer’s perspective. Monitors can refer to the screens themselves, as well as the people watching them.

31. Natural sound

Natural sound (also known as nat sound, white noise, or background noise) is naturally occurring audio that can be a potential obstacle in live streaming. Luckily, it’s fairly easy to manage. Live producers will usually listen for natural sound in their monitors before anything else to confirm that audio is being properly recorded. The wind is a good indicator of natural sound at an outdoor event, as is the dull roar of a crowd at a sporting event.

32. Redundancy

Redundancy refers to a variety of backup techniques during a live stream. A fully redundant live set includes backup camera feeds, backup data source streams coming out of the mixer, backup RTMP streams, backup signal types being sent, backup encoders for each master feed, backup streams or profiles for playback, and backup CDNs that each set of streams are being served from.
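
At its simplest, any one of these backups comes down to a failover rule: use the primary while it is healthy, otherwise fall through to the next option. The sketch below illustrates that rule only; the feed names and the health flags are made up, and how health is actually detected is outside its scope.

```python
def pick_active_feed(feeds):
    """Return the name of the first healthy feed, or None if every feed is down.

    `feeds` is a list of (name, is_healthy) tuples in priority order.
    """
    for name, is_healthy in feeds:
        if is_healthy:
            return name
    return None


# Hypothetical setup: the primary encoder has failed, so the backup takes over.
feeds = [("primary-encoder", False), ("backup-encoder", True), ("backup-cdn", True)]
print(pick_active_feed(feeds))  # prints "backup-encoder"
```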

33. Real-time messaging protocol (RTMP)

Real-time messaging protocol is the media streaming protocol used for live streaming from a broadcaster to the Vimeo Live backend. Originally developed by Macromedia, which Adobe later acquired, this protocol is used to transfer video over the internet. The RTMP URL is the web address that provides the path for data to be passed from the broadcast software to the streaming server.

34. Real-time messaging protocol encrypted (RTMPE)

Real-time messaging protocol encrypted (RTMPE) is the encrypted media streaming system based on RTMP.

35. Satellite

Satellite is a fast, reliable (albeit expensive) solution used to transmit a stream from the event source to the encoder destination. For large-scale events, live stream production crews will rent a satellite for distribution; satellite is also often used in remote locations, or as a redundant source (with fiber) for the largest-scale events. Note that bad weather negatively affects satellite signal strength.

36. Shadow ban

Shadow ban is the process of blocking a user from a chat. When employed, the user can still continue to post, but none of their messages appear to other users. This feature is to combat those who abuse the chat feature, or become obstructive to the chat or the event itself.
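
The behavior can be sketched as a filter applied when messages are displayed rather than when they are posted. This is an illustration only: `ChatRoom` is a hypothetical class, and showing shadow-banned users their own messages is an assumption about how the ban stays unnoticed.

```python
class ChatRoom:
    """Minimal chat model where shadow-banned users can post but are hidden from others."""

    def __init__(self):
        self.messages = []          # list of (author, text) in posting order
        self.shadow_banned = set()  # names of shadow-banned users

    def post(self, author: str, text: str) -> None:
        """Posting always succeeds, even for shadow-banned users."""
        self.messages.append((author, text))

    def visible_to(self, viewer: str):
        """Return the messages this viewer should see."""
        return [
            (author, text)
            for author, text in self.messages
            if author not in self.shadow_banned or author == viewer
        ]


room = ChatRoom()
room.post("ana", "Great stream!")
room.post("troll", "spam spam spam")
room.shadow_banned.add("troll")
print(room.visible_to("ana"))  # prints "[('ana', 'Great stream!')]"
```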

37. Simulcast

A simulcast is any live event that’s streamed on multiple platforms simultaneously. Simulcast live streams can include content that’s airing live on television in addition to digital platforms. They can also be exclusive to digital platforms, like Vimeo.

38. Slate

A slate is an image or short video clip that’s deployed in broadcast software to “cover up” a live stream for a certain amount of time. They’re called slates because they’re the digital equivalent of the painted slates of wood used to display messages in the early days of film. Some common slate messages are, “We’ll be right back,” “We’re experiencing technical difficulties,” and “Thanks for watching!” While a slate is deployed, a broadcaster is free to adjust settings, troubleshoot, or even take a restroom break without the viewers knowing. For streams simulcast from television, slates are often used to cover up commercial breaks that are unauthorized to be streamed online.

39. Software encoder

A software encoder is a software program that encodes and broadcasts live video. There are many types of encoders, but all are programs installed on a computer. They can be customized based on the specifications of the machine they’re running on.

40. Streaming server

A streaming server is a machine that receives the stream and performs certain processes like encoding or compression, then transmits the packets of data to the player where viewers can watch.

41. Stream key

A stream key is an alphanumeric key that allows encoding software to identify and communicate with the streaming server.
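
Many encoders ask for a server URL and a stream key as separate fields and join them into a single publish address. The sketch below assumes that common `server/key` convention and takes the alphanumeric definition above literally; the URL and key are placeholders, not real Vimeo values.

```python
ALLOWED_SCHEMES = ("rtmp://", "rtmps://", "rtmpe://")


def build_ingest_url(server_url: str, stream_key: str) -> str:
    """Join a server URL and a stream key into one publish URL."""
    if not server_url.startswith(ALLOWED_SCHEMES):
        raise ValueError("server_url must start with rtmp://, rtmps://, or rtmpe://")
    if not stream_key.isalnum():
        raise ValueError("stream_key must be a non-empty alphanumeric string")
    # Strip any trailing slash so the result never contains a double slash.
    return f"{server_url.rstrip('/')}/{stream_key}"


# Placeholder values for illustration only.
print(build_ingest_url("rtmp://live.example.com/app/", "abc123"))
# prints "rtmp://live.example.com/app/abc123"
```

Treat a stream key like a password: anyone who has it can broadcast to your event.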

42. Switcher

Also known as a “video mixer” or “vision mixer,” a switcher is a device used to select between several different video sources. In some cases, switchers can be used for compositing (mixing) video sources together to create special effects.

43. Uplink

Uplink is the connection from a production truck to the satellite for the master live stream.

44. Video artifacting

Video artifacting is a specific type of pixelation where objects in a video appear distorted. This differs from regular pixelation because only certain objects or portions of the screen will appear pixelated, versus the entire frame. Video artifacting often indicates a problem with compression settings.

If you’re interested in live streaming, you may also want to learn more about what you can do with Vimeo before your video gets to the Vimeo video player. You can leverage Vimeo’s editing tools like the video trimmer, video merger, video compressor, video cropping tool, GIF maker, and more.